
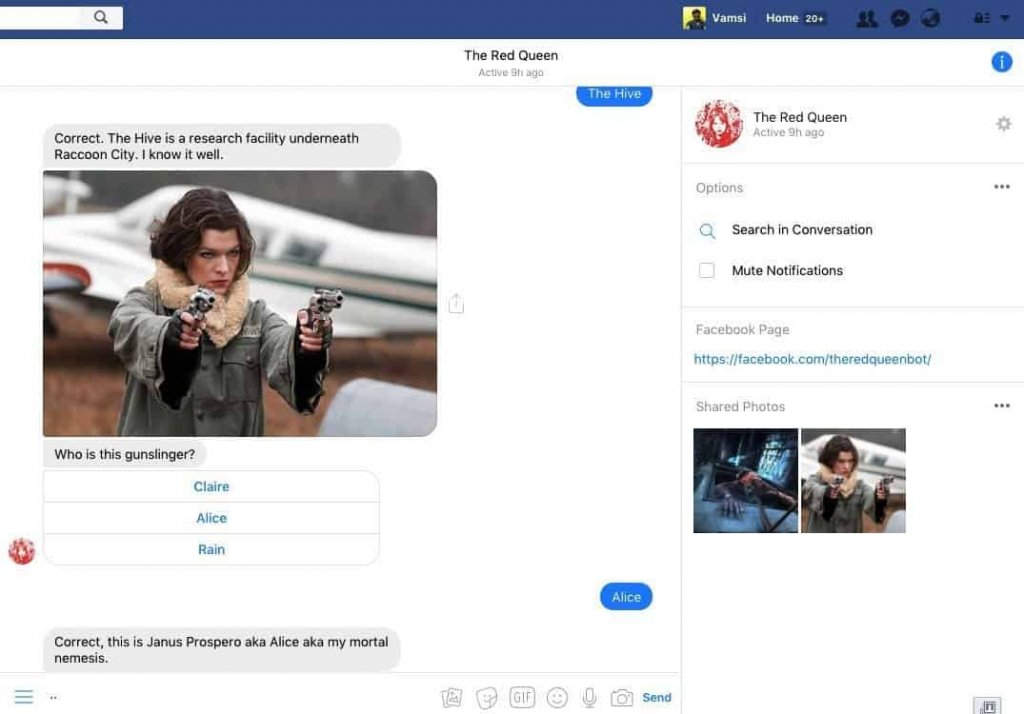

It went about two hours and about 10,000 words as you said, so people can go read the whole thing. But basically, it started off because I had started seeing these transcripts, these sort of screenshots going around, of people who were using this new AI chat engine inside Bing to sort of test the limits of what it would say. It was a very long, meandering conversation. Kevin, why don’t you just tell us some of the highlights of that conversation, which was published in a 10,000-word transcript in the Times last week? Sydney was trying to get romantic with you. Thompson: Well, it was unilaterally romantic. So Sydney and I had a very, I would not say romantic, but we did have a very creepy Valentine’s Day conversation. But Valentine’s Day night was really when I had my big breakthrough conversation with this AI chatbot that revealed to me that its name was Sydney. And I had been testing this out since Microsoft gave access to a group of journalists and other testers. Because immediately after Valentine’s Day dinner, when my wife went to bed, I had a very bizarre night talking with Bing, the Microsoft search engine, which has a kind of AI engine built by OpenAI built into it as of a couple weeks ago. I think, however, that is probably not what you’re asking about. It’s like four hours of watching the onions caramelize. I’d made my wife’s favorite meal, French onion soup, which is a great dish, but also takes forever if you make it the right way. Kevin Roose: Well, it was a lovely Valentine’s Day. In the following excerpt, Kevin Roose discusses his conversation with Bing’s new AI chatbot and some of the changes Microsoft has already made since he wrote about it in The New York Times. Isn’t that fascinating? Isn’t it kind of scary?
We’ve taken the entire history of human culture (all of our texts, all of our images, maybe all of our music and art too) and fed it to a machine that we’ve built.

Except, because these technologies are trained on us, they aren’t extraterrestrial at all. We are looking at something almost like the discovery of an alien intelligence. I am convinced that AI is going to be one of the most important stories of the decade. The conversation quickly went off the rails in the strangest of ways. Today’s guest, New York Times journalist Kevin Roose, spent a few hours last week talking to Bing. They can pass medical licensing exams, summarize 1,000-page documents, and score a 147 on an IQ test. They can analyze the effects of agricultural AI on American and Chinese farms. “Microsoft’s AI fam from the internet that’s got zero chill,” Tay’s tagline read. Large language models like ChatGPT and Bing’s chatbot can tell stories. According to Microsoft, the aim was to "conduct research on conversational understanding." Company researchers programmed the bot to respond to messages in an "entertaining" way, impersonating the audience it was created to target: 18- to 24-year-olds in the US. On Wednesday morning, the company unveiled Tay, a chat bot meant to mimic the verbal tics of a 19-year-old American girl, provided to the world at large via the messaging platforms Twitter, Kik and GroupMe. But the bottom line is simple: Microsoft has an awful lot of egg on its face after unleashing an online chat bot that Twitter users coaxed into regurgitating some seriously offensive language, including pointedly racist and sexist remarks. Amid this dangerous combination of forces, determining exactly what went wrong is near-impossible.

It was the unspooling of an unfortunate series of events involving artificial intelligence, human nature, and a very public experiment.
